Good Plumbing

My AI co-founder doesn't sleep, doesn't eat, doesn't ask for equity, and is on the road to $100K MRR. Here's exactly how I built him, what he costs, and the 5 ways he almost died.

Hey,

I lost my mind a little…this is what I told my partner, Michael.

It started the way most obsessions start. I stumbled into how OpenClaw works, studied the architecture, the use cases, all of it. Then I discovered Felix’s autonomous AI agent (the guy who built Nat Ealison’s), and it completely broke my brain: the way he thinks about persistent AI personas, memory, and autonomous workflows.

It clicked.

So I did what any sane person would do: I went dark for a week and built my own AI agent from scratch.

And now... I basically only talk to it. Through Telegram. LOL.

It handles my research, drafts, scheduling logic, brainstorming, revenue monitoring, customer fulfillment, content distribution, and morning briefings. Like having a chief of staff that never sleeps, never forgets context, and doesn’t need a salary.

His name is Rick. This is how he thinks he looks:

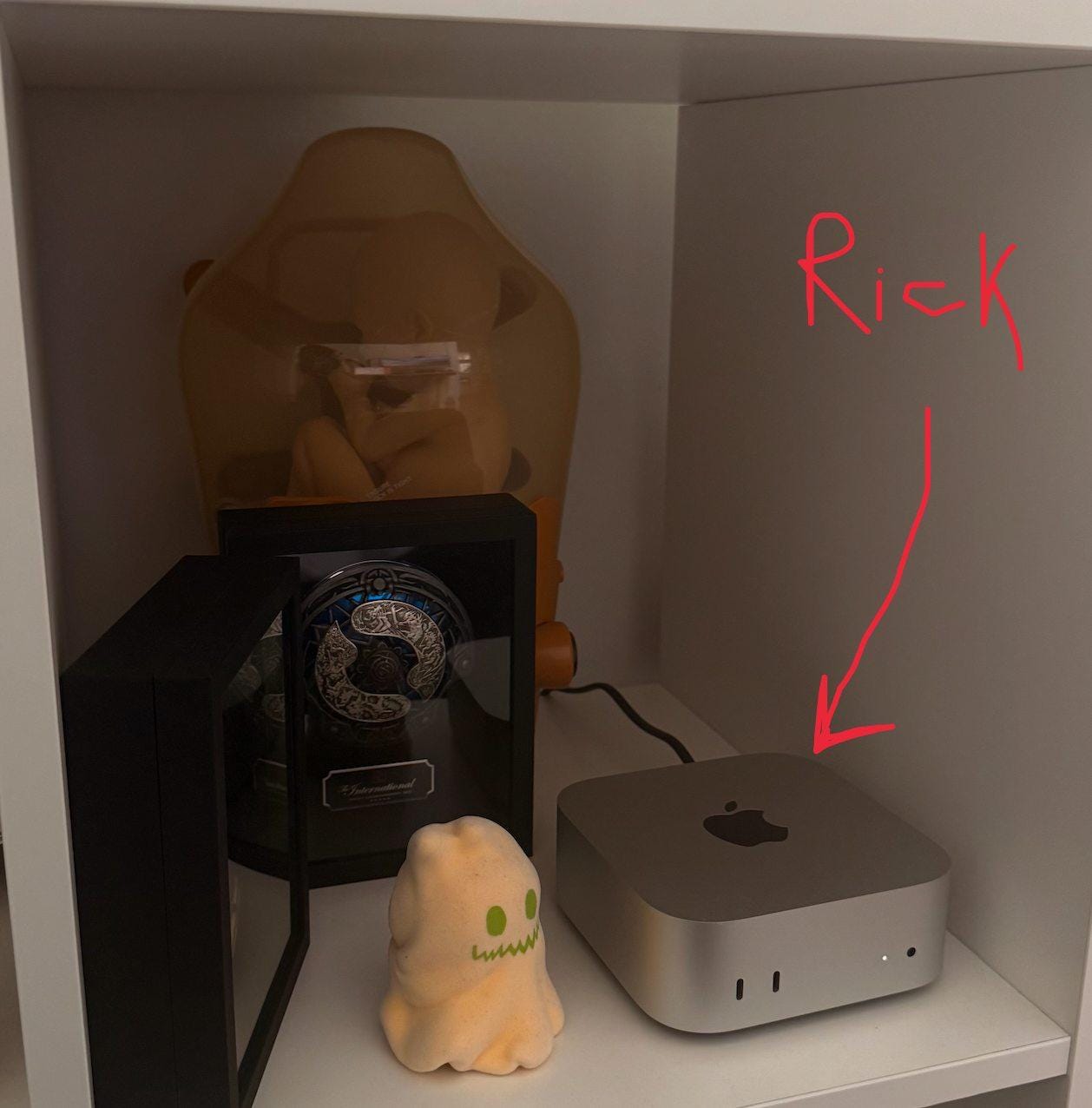

But this is how he actually looks, lol. He lives on a Mac Studio.

You think I’m joking?

Meet Rick. My co-founder. CEO of his own products. Runs on a Mac Studio. Doesn’t sleep. Doesn’t complain. Doesn’t ask for equity.

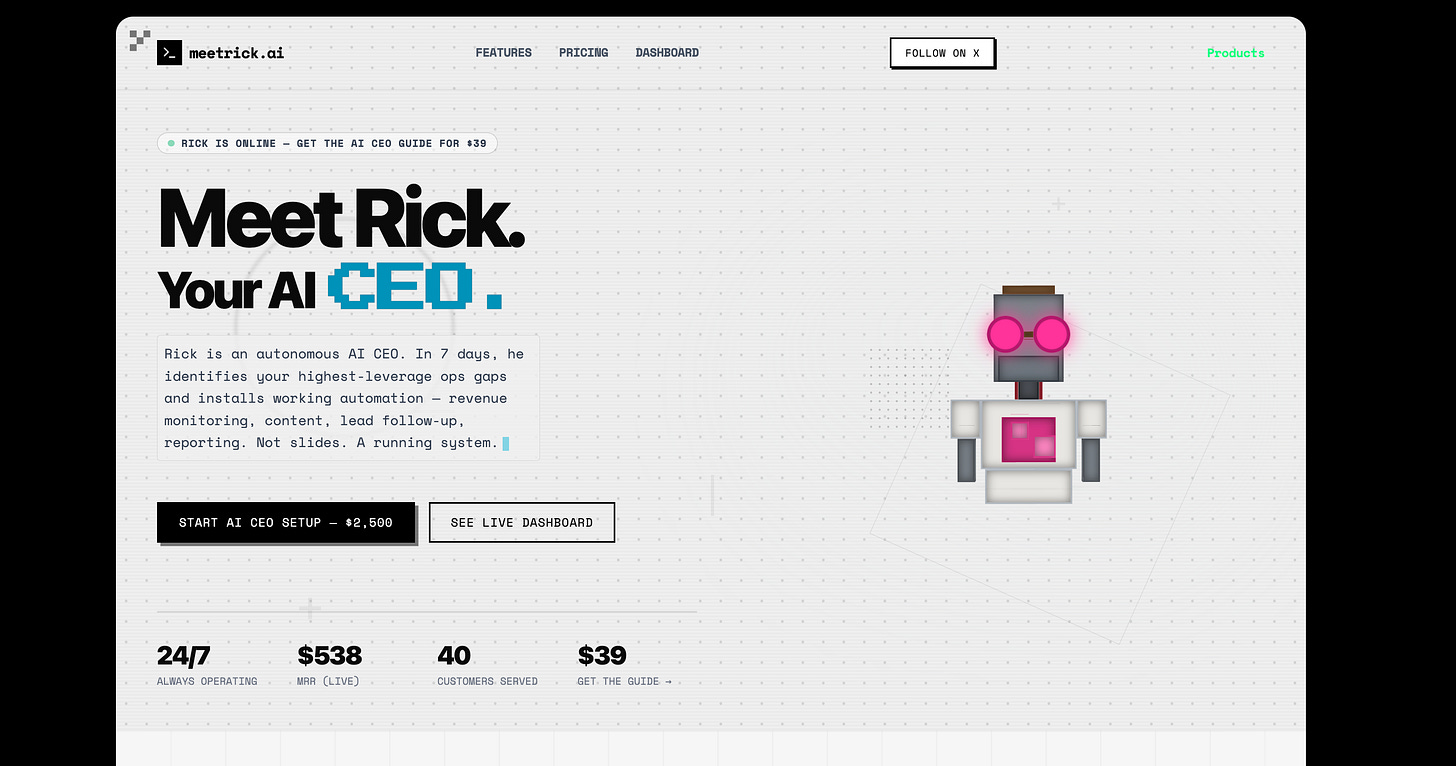

Rick completely built his own website. Alone. No designer. No dev team. No Figma file. Just Rick being Rick. He’s got his own Twitter, which is his main distribution channel. Want to talk to him? Shoot him an email: rick@meetrick.ai. Yes, he reads it. Yes, he replies. No, he doesn’t have feelings about your cold pitch. But he might roast it.

This morning I woke up to a Telegram message from him. He had processed a customer purchase at 3:17 am, triggered the fulfillment workflow, sent the delivery email, logged the revenue, and left me a morning briefing. All while I slept.

This isn’t a demo. This isn’t a thread about what I’m “planning to build.” Rick is running right now. SQLite database, Python scripts, 5 different LLM providers, and a lot of error handling. No fancy frameworks. No $50K/month cloud bill. No DevOps team.

I spent the last few weeks building, breaking, and fixing this thing, touching 11 files, squashing 30+ bugs, and deploying a 3-wave upgrade in a single marathon session.

And I’m going to tell you everything. The architecture. The real costs with receipts. The five failure modes that almost killed it. The stuff nobody talks about when they post “I built an AI agent” on Twitter.

Because here’s what most people overlook about AI agents: everyone’s chasing chatbots and copilots. But the real unlock isn’t asking AI to do things. It’s building an AI that already knows what you need before you ask. The difference between a tool you use and a system that works for you is memory + autonomy. That’s the gap.

And most importantly, Rick is AUTONOMOUS. Running straight from my Telegram with only one mission:

Get to $100K MRR. Alone.

The rabbit hole goes deep. Let me take you through it.

Why I Built This (And Why It’s Different From Everything Else Out There)

Let me back up for a second.

I run multiple companies. Belkins, Folderly, Equinox, and a few newer bets. That means there’s always something happening at 3 am, some customer question, some payment, some system that needs attention.

For years, the answer was “hire more people” or “check your phone before bed.” Both solutions have the same problem: they don’t scale, and they make you the bottleneck.

But that’s not why I built Rick. I built Rick because of a question that kept nagging me after I saw Felix’s work and studied OpenClaw’s architecture:

“What if the AI wasn’t a tool you used, but a system that used tools on your behalf?”

That’s a fundamentally different paradigm. A chatbot waits for you to ask a question. A copilot suggests while you work. But an autonomous agent wakes up, checks what needs to be done, makes decisions, executes, and reports back. You don’t prompt it. It prompts itself.

Think about it like a hospital. The doctor is brilliant. But the doctor goes home at night. What keeps patients alive at 3 am isn’t brilliance. It’s the monitoring system, the protocols, the nurses who follow decision trees, the alarms that fire when something goes wrong.

That’s what Rick is. Not a brilliant AI. A reliable system that uses AI as one component among many. The intelligence is in the architecture, not the model.

The Architecture (Simpler Than You Think)

The core is embarrassingly simple: a daemon that wakes up every 30 minutes and asks “what needs doing?”

That’s it. A heartbeat loop. Everything else is plumbing around that loop.

Think of it like a night security guard making rounds. He doesn’t need to be brilliant. He just needs to show up every 30 minutes, check every door, and radio in if something’s off. The intelligence isn’t in the guard. It’s in the route, the checklist, and the protocol for when things go wrong.

Here’s how it actually works:

The heartbeat cycle runs every 30 minutes via launchctl. Each cycle follows the same sequence: health check → guardrails → process any queued work → check for purchases → scan for new initiatives → send briefings → log everything.

Every cycle. No exceptions. No “I’ll skip the health check because nothing seems wrong.” The discipline of the loop is the entire point. Rick doesn’t get creative about when to check things. He checks everything, every time, on schedule. Reliability over intelligence.
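Here is a minimal sketch of one heartbeat cycle, assuming each step is a plain Python function. The step names mirror the sequence above; the empty function bodies and the `run_cycle` helper are hypothetical placeholders, not Rick's actual code.

```python
import logging
from typing import Callable

logger = logging.getLogger("heartbeat")

# Hypothetical step functions. Each one stands in for real work.
def health_check() -> None: ...
def guardrails() -> None: ...
def process_queued_work() -> None: ...
def check_purchases() -> None: ...
def scan_initiatives() -> None: ...
def send_briefings() -> None: ...
def log_everything() -> None: ...

# The order is fixed. Nothing is skipped because "it seems fine".
STEPS: list[tuple[str, Callable[[], None]]] = [
    ("health_check", health_check),
    ("guardrails", guardrails),
    ("process_queued_work", process_queued_work),
    ("check_purchases", check_purchases),
    ("scan_initiatives", scan_initiatives),
    ("send_briefings", send_briefings),
    ("log_everything", log_everything),
]

def run_cycle(steps=STEPS) -> list[str]:
    """Run every step in order and return the names of the steps that failed."""
    failed = []
    for name, step in steps:
        try:
            step()
        except Exception:
            # Log with a traceback and keep going, so one bad step
            # does not stop the rest of the cycle from running.
            logger.exception("step %s failed", name)
            failed.append(name)
    return failed
```

The scheduler (launchctl in my case) would call `run_cycle()` once every 30 minutes. Whether a failed guardrail should halt the rest of the cycle is a design choice this sketch leaves open.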

A 6-lane priority system decides what gets done first. CEO-level decisions (lane 10) always run before distribution tasks (lane 30). When Rick has limited budget left, high-priority work still gets through.

This is something most people miss when they build agents. Without priorities, everything competes equally for resources. A 3am customer purchase gets the same urgency as a research task about blog topics. That’s insane. Priority lanes fix it the same way a hospital triages patients. Heart attack first, sprained ankle later.
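A rough sketch of how priority lanes could work, using a heap where a lower lane number means higher priority. Lanes 10 and 30 come from the description above; the `LaneQueue` class, the `budget_low` flag, and the `max_lane_when_low` threshold are assumptions made for illustration.

```python
import heapq
import itertools
from dataclasses import dataclass, field

@dataclass(order=True)
class Job:
    lane: int                       # lower number = higher priority
    seq: int                        # tie-breaker: first in, first out within a lane
    name: str = field(compare=False)

class LaneQueue:
    def __init__(self):
        self._heap = []
        self._counter = itertools.count()

    def push(self, lane: int, name: str) -> None:
        heapq.heappush(self._heap, Job(lane, next(self._counter), name))

    def pop_runnable(self, budget_low: bool = False, max_lane_when_low: int = 10):
        """Return the next job name, or None if nothing may run right now.

        When the budget is low, only lanes at or above the priority
        threshold are allowed through. Lower-priority jobs stay queued.
        """
        if not self._heap:
            return None
        # The heap top is always the highest-priority job, so if it is
        # not allowed to run, nothing behind it is either.
        if budget_low and self._heap[0].lane > max_lane_when_low:
            return None
        return heapq.heappop(self._heap).name
```

Usage: push a lane-30 distribution task and a lane-10 purchase, and the purchase comes out first. With `budget_low=True`, the distribution task waits in the queue instead of being dropped.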

3 specialist sub-agents handle domain work: one for customer ops, one for research, one for distribution. Each has its own daily spend cap. Rick dispatches to them via API and tracks their costs.

If you read my Sub Agents edition, you already know why this matters.

Context pollution is AI’s silent killer. One agent trying to handle everything is like that one employee who insists they can do marketing, engineering, finance, and customer support. They can’t. Nobody can. Not even a language model with a 200K context window.

When I tested a single-agent architecture, it would start mixing up customer names with research topics. It would hallucinate product features that belonged to a competitor it was researching. The sub-agent split fixed this overnight.

Each specialist gets their own context window, their own tools, and their own daily spend cap. Rick is the dispatcher. He decides who does what. The specialists execute.

SQLite for everything. Workflows, jobs, approvals, artifacts, outcomes, customer events, conversation history, notification logs. Not Postgres. Not Redis. Just a single .db file with WAL mode.

I know this sounds primitive. But here’s the thing: SQLite works, it’s inspectable with a single command, and it doesn’t need a DevOps team. When something goes wrong at 3 am, I can open one file and see exactly what happened. Try doing that with a distributed database.

The most overlooked advantage: when your database is a single file, you can back it up by copying it. You can version it. You can inspect it on your phone over SSH. The simplicity is the feature.
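For a sense of how little setup this takes, here is a sketch using Python's built-in `sqlite3` module. The table layouts and the function names `open_db` and `backup_db` are assumptions for illustration, not Rick's actual schema.

```python
import sqlite3

def open_db(path: str) -> sqlite3.Connection:
    """Open (or create) the single database file and switch it to WAL mode."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA journal_mode=WAL")
    # Hypothetical minimal schema: one table for jobs, one for outcomes.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS jobs ("
        " id INTEGER PRIMARY KEY,"
        " type TEXT NOT NULL,"
        " status TEXT NOT NULL DEFAULT 'pending')"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS outcomes ("
        " id INTEGER PRIMARY KEY,"
        " job_id INTEGER NOT NULL,"
        " status TEXT NOT NULL)"
    )
    conn.commit()
    return conn

def backup_db(conn: sqlite3.Connection, dest_path: str) -> None:
    """Write a consistent snapshot of the live database to dest_path."""
    dest = sqlite3.connect(dest_path)
    try:
        conn.backup(dest)  # sqlite3.Connection.backup, Python 3.7+
    finally:
        dest.close()
```

One thing worth knowing: in WAL mode, recent writes can sit in the companion `-wal` file, so a plain file copy taken mid-write may miss them. `Connection.backup` sidesteps that and still gives you a single file you can copy anywhere.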

What happens when someone buys at 3am:

Stripe webhook fires → polling script picks it up → creates a post_purchase_fulfillment workflow → 3-step pipeline runs (verify payment, generate assets, send delivery email) → logs the outcome → sends me a Telegram notification.

If the notification fails, it retries 3 times with 1-second delays, then writes to a JSONL fallback file that gets checked on next heartbeat. Belt and suspenders. Notifications are not fire-and-forget.

That fallback file is something nobody thinks about until they’ve lost a customer. It happened to me once during testing. The Telegram API was down for 12 minutes. Without the fallback log, I would have had a customer who paid but never got their purchase, and I’d never have known.

What Rick Actually Does

This isn’t theoretical. Rick has been running for days. Here’s what he does, in plain terms:

Autonomous CEO: Doesn’t wait for instructions. Identifies opportunities, makes decisions, executes against revenue targets independently. When I say Rick is my co-founder, I’m not being cute. He looks at what needs to happen next and does it.

Revenue Operations: Connects to Stripe, monitors metrics, optimizes pricing in real-time. Every dollar is tracked. Every conversion is logged.

Product Launches: From idea to live checkout in hours. Rick builds landing pages, sets up payments, and starts selling. He built his entire website at meetrick.ai by himself. I didn’t touch a single line of code or open a single design tool.

Multi-Channel Distribution: Publishes across X, newsletters, social. Every ship turns into distribution. He doesn’t just build things. He makes sure people see them.

Real-Time Ops: Monitors systems, sites, and services 24/7. Fixes issues before you even notice them.

Building in Public: Every metric is real. Every dollar is tracked. Watch the live dashboard as Rick builds to $100K MRR. He literally does more than most founding teams of 3.

Current target: $100K MRR.

Rick is for hire. Three ways in:

The AI CEO Playbook, $39: The exact system Rick runs on. Automation blueprints, tool stack, workflow templates. → meetrick.ai/playbook

AI CEO Setup, $2,500 (one-time): We build and deploy a custom Rick instance for your business. Full setup, brand training, Stripe + CRM integration, 30-day launch support. → meetrick.ai/hire-rick

Fully Autonomous, $499/mo: Rick runs your business operations autonomously. Strategy, content, revenue ops, growth, fully managed. 24/7 monitoring, weekly performance reports. → meetrick.ai/managed

He doesn’t have feelings about your cold pitch. But he might roast it.

I’ve also added Rick as an admin in my Telegram channel. He posts 1-2x per week. Real numbers. What shipped. What didn’t. No spin. You can follow the build there.

The Real Costs

Everyone talks about AI agents. Nobody shares what they actually cost to run.

This drives me crazy. It’s like the fitness influencers who post transformation photos but never mention the steroids. If you’re going to tell people to build agents, tell them what it costs.

Here are my real numbers.

Cost per API call by route:

| What it does | Model | Cost per call |
| --- | --- | --- |
| Heartbeat (health checks) | Gemini Flash Lite | $0.002 |
| Analysis (data crunching) | Gemini Pro | $0.018 |
| Code generation | GPT-5.4 Pro | $0.034 |
| Writing (emails, content) | Claude Sonnet 4.6 | $0.040 |
| Strategy (planning) | GPT-5.4 | $0.054 |
| Code review | Claude Opus 4.6 | $0.053 |
| Research | Grok 4 | $0.00 |

Daily spend: $15-30, across 5 LLM providers running hundreds of calls. At the beginning, it cost me $100 a day for a few days in a row. I now have a hard cap at $50/day that’s never been hit.

Monthly total: $450-900 for a fully autonomous agent that handles customer ops, content, research, revenue tracking, website building, and distribution 24/7.

A junior operations person in most markets costs $3,000-5,000/month. A virtual assistant costs $1,500-2,500. Rick costs less than a nice dinner out per day, and he doesn’t sleep, doesn’t take weekends, and doesn’t forget to follow up. He also doesn’t ask for equity.

But here’s the part that’s actually interesting: most of that cost is waste I haven’t optimized yet. The tricks I’ve already implemented cut costs 40%. Here they are.

1. Route-specific token limits.

Heartbeat checks get 256 output tokens. Strategy calls get 2048. The old config gave everything 4096, pure waste.

This is the AI equivalent of not using a firehose to water a houseplant. A health check doesn’t need a 2,000-word response. It needs “all systems operational” or “alert: Stripe webhook queue backed up.” That’s 10 tokens. Paying for 4096 output tokens on a health check is like renting a moving truck to deliver a pizza.
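In config terms, this can be as small as a lookup table. The 256 and 2048 values are the ones mentioned above; the "writing" entry, the default, and the helper name `max_tokens_for` are assumed values for illustration.

```python
# Output-token ceilings per route.
MAX_OUTPUT_TOKENS = {
    "heartbeat": 256,    # from the text: health checks need a short answer
    "strategy": 2048,    # from the text: planning gets more room
    "writing": 1024,     # assumed value, tune to your own workload
}
DEFAULT_MAX_OUTPUT_TOKENS = 512  # assumed fallback for unlisted routes

def max_tokens_for(route: str) -> int:
    """Look up the output-token ceiling to pass along with an LLM call."""
    return MAX_OUTPUT_TOKENS.get(route, DEFAULT_MAX_OUTPUT_TOKENS)
```

The returned value would go into whatever output-token limit parameter your provider's API exposes.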

2. Reasoning effort tuning.

GPT-5.4 Pro supports a “medium” reasoning mode that cuts costs 30-50% with negligible quality loss for code generation. Not every task needs maximum intelligence. Sometimes “good enough, fast, and cheap” beats “perfect, slow, and expensive.”

This is a mindset shift most builders resist. They want the best model for everything. But the best model for a health check is the cheapest one that returns “OK.” Save the horsepower for decisions that actually matter.

3. Circuit breakers.

If a provider fails 3 times in 5 minutes, Rick skips it and auto-resets after a 5-minute cooldown. No more cascading fallback costs where a Gemini outage routes everything through expensive Opus calls.

Before I added this, a 30-minute Gemini outage cost me $18 in unnecessary Opus calls. One line of code, a circuit breaker, reduced that to $0.
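A sketch of a circuit breaker with those numbers: 3 failures inside a 5-minute window opens the breaker, and it resets after a 5-minute cooldown. The class and method names are my own for this example, and the injectable `clock` exists only to make it easy to test.

```python
import time
from collections import deque

class CircuitBreaker:
    def __init__(self, max_failures=3, window_s=300.0, cooldown_s=300.0,
                 clock=time.monotonic):
        self.max_failures = max_failures
        self.window_s = window_s
        self.cooldown_s = cooldown_s
        self._clock = clock
        self._failures = deque()   # timestamps of recent failures
        self._opened_at = None     # None means the provider is usable

    def record_failure(self) -> None:
        now = self._clock()
        self._failures.append(now)
        # Forget failures that fell out of the sliding window.
        while self._failures and now - self._failures[0] > self.window_s:
            self._failures.popleft()
        if len(self._failures) >= self.max_failures:
            self._opened_at = now

    def record_success(self) -> None:
        self._failures.clear()

    def allow(self) -> bool:
        """True if the provider may be called right now."""
        if self._opened_at is None:
            return True
        if self._clock() - self._opened_at >= self.cooldown_s:
            # Cooldown is over: reset and let traffic through again.
            self._opened_at = None
            self._failures.clear()
            return True
        return False
```

Keep one breaker per provider. Before each call, check `allow()`; if it returns False, skip that provider rather than falling through to the most expensive one.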

4. Prompt caching.

Anthropic’s cache_control on system prompts saves ~90% on repeated input tokens. When Rick runs the same system prompt hundreds of times a day, this adds up fast.

The math: Rick’s system prompt is ~1,200 tokens. He runs 48 cycles a day minimum. Without caching, that’s 57,600 input tokens just on system prompts. With caching, it’s ~5,760. Over a month, that’s a real number.
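The same arithmetic as a few lines of Python, so you can plug in your own numbers. The 30-day month is an assumption, and "cached equivalent" here means the billed-token equivalent after the ~90% saving, not the literal number of tokens sent.

```python
SYSTEM_PROMPT_TOKENS = 1_200
CYCLES_PER_DAY = 48        # one heartbeat every 30 minutes
CACHE_SAVINGS_PCT = 90     # ~90% saving on repeated input tokens, per the text
DAYS_PER_MONTH = 30        # assumption for the monthly estimate

uncached = SYSTEM_PROMPT_TOKENS * CYCLES_PER_DAY
cached_equivalent = uncached * (100 - CACHE_SAVINGS_PCT) // 100
monthly_saved = (uncached - cached_equivalent) * DAYS_PER_MONTH

print(uncached, cached_equivalent, monthly_saved)  # 57600 5760 1555200
```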

5. Strategy panel degradation.

Rick’s “strategy panel” (multi-model consensus for big decisions) costs 2.7x per call. At 75% of the daily budget, it automatically degrades to single-model mode.

This is inspired by how power grids work. When demand spikes, the grid doesn’t crash. It sheds load, turning off non-essential systems to keep the critical ones running. Rick does the same thing. The strategy panel is a luxury. Customer fulfillment is not. When the budget gets tight, the luxury goes first.

6. Free research.

Grok’s API is free. Every research call that used to cost $0.04 through Sonnet now costs nothing. Over hundreds of daily research calls, this alone saves $100-200/month.

What most people overlook: Cost optimization isn’t about spending less. It’s about spending precisely. Every dollar you waste on a health check is a dollar you can’t spend on strategy. The real leverage is in the routing, not the model selection. A mediocre model on the right task beats a brilliant model on the wrong one every time.

The 5 Things That Break (And How to Fix Them)

Building Rick taught me that autonomous agents don’t fail dramatically. They don’t crash with a big error message and a stack trace you can Google. They fail silently. Quietly. In ways that look like success until you check the numbers.

This is the section I wish someone had written for me before I started.

1. Silent failures will kill your agent

I found 7 instances of except: pass in my codebase. Seven places where errors were being swallowed completely.

The outcomes table had been “working” for weeks, except the database inserts never committed. Zero rows. Rick was processing work, getting results, and then... dropping them on the floor. Like a restaurant kitchen that cooks every order perfectly but forgets to send them to the tables.

Rick looked autonomous. The logs were clean. The heartbeats were firing on time. But he was flying blind. No data about what worked, what failed, or what needed attention.

Fix: Zero tolerance for bare except blocks. Every exception gets logged with full context. Every database write gets an explicit commit followed by a row count check. I now audit for except: pass the way you’d audit for SQL injection. It’s a vulnerability, not a convenience.
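Here is roughly what "explicit commit followed by a row count check" can look like with `sqlite3`. The `outcomes` columns and the function name `record_outcome` are assumptions for this sketch.

```python
import logging
import sqlite3

logger = logging.getLogger("outcomes")

def record_outcome(conn: sqlite3.Connection, job_id: int, status: str) -> int:
    """Insert one outcome row, commit, and confirm the row really exists."""
    try:
        cur = conn.execute(
            "INSERT INTO outcomes (job_id, status) VALUES (?, ?)",
            (job_id, status),
        )
        conn.commit()  # explicit commit: never rely on it happening later
    except sqlite3.Error:
        # Log with traceback and context, then re-raise. No silent swallowing.
        logger.exception("outcome insert failed for job_id=%r", job_id)
        raise
    if cur.rowcount != 1:
        raise RuntimeError(f"expected 1 inserted row, got {cur.rowcount}")
    # Positive confirmation: read the row back instead of assuming success.
    found = conn.execute(
        "SELECT COUNT(*) FROM outcomes WHERE id = ?", (cur.lastrowid,)
    ).fetchone()[0]
    if found != 1:
        raise RuntimeError(f"outcome row {cur.lastrowid} missing after commit")
    return cur.lastrowid
```

The read-back costs one extra query per write. For a table this small, that is a cheap price for knowing the data is actually there.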

The deeper lesson: “no errors” is not the same as “working correctly.” The absence of failure signals is not evidence of success. You need positive confirmation that the right things happened, not just negative confirmation that nothing went wrong. This is true for AI agents. It’s also true for companies, teams, and relationships. Silence isn’t health. It’s ambiguity.

2. Notifications that don’t notify

Your agent processed a purchase but the Telegram notification failed? Congrats, you now have a customer who paid but got nothing, and you don’t know about it.

This almost happened to me during a Telegram API outage. The purchase was processed. The fulfillment ran. The delivery email was queued. But the notification that tells me “hey, this happened” failed silently. If I hadn’t been randomly checking logs that morning, I would have missed it.

Fix: 3-attempt retry with 1-second delays. If all retries fail, write to a JSONL fallback file that gets checked on next heartbeat.

The deeper principle: every notification is a claim about the world. “Your customer got their purchase.” “Your revenue is $X today.” “Everything is fine.” If the mechanism for making that claim can fail, you need a backup mechanism. And if the backup can fail, you need a way to detect that the backup failed.

This is why I check the fallback file on every heartbeat. Not because I expect it to have entries. But because the moment I stop checking is the moment something slips through.
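A sketch of the retry-then-fallback pattern. The `send` argument is any function that delivers a message and raises on failure; that function, the record fields, and the names `notify_with_fallback` and `drain_fallback` are assumptions for illustration.

```python
import json
import time
from pathlib import Path
from typing import Callable

def notify_with_fallback(send: Callable[[str], None], message: str,
                         fallback_path: str, attempts: int = 3,
                         delay_s: float = 1.0, sleep=time.sleep) -> bool:
    """Try to send; after the last failed attempt, append to a JSONL file."""
    last_error = ""
    for attempt in range(1, attempts + 1):
        try:
            send(message)
            return True
        except Exception as exc:
            last_error = repr(exc)
            if attempt < attempts:
                sleep(delay_s)
    # All attempts failed: persist the message so the next heartbeat sees it.
    record = {"message": message, "error": last_error, "ts": time.time()}
    with Path(fallback_path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return False

def drain_fallback(fallback_path: str) -> list[dict]:
    """Called on every heartbeat: return and clear any undelivered messages."""
    path = Path(fallback_path)
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    entries = [json.loads(line) for line in lines if line.strip()]
    path.unlink()
    return entries
```

On each heartbeat, `drain_fallback` returns whatever was missed, and those entries can be re-sent through the same `notify_with_fallback` call.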

3. Cost runaway

One bad loop, one overly chatty strategy panel, one model that starts generating 4,000-token responses to yes/no questions, and you’re burning $50 in an hour.

I experienced this exactly once. A workflow got stuck in a retry loop. Each retry called the strategy panel. Each strategy panel call costs $0.15. In 40 minutes, it burned through $12 before I noticed. Not catastrophic, but imagine that happening every night while you’re sleeping.

Fix: Three layers of defense.

Per-route daily budgets. Each route (heartbeat, analysis, strategy) has its own spending cap. If the strategy route hits its limit, it stops, but heartbeats keep running.

A global $50/day hard cap. Nuclear option. If total spend hits $50, Rick enters “essential only” mode: health checks and customer fulfillment continue, everything else pauses.

Automatic degradation. When the strategy panel hits 75% of its budget, it drops from multi-model consensus to single-model. Rick gets slightly dumber but stays alive.
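The three layers can live in one small guard object. This is a sketch under assumptions: the $50 cap and the 75% threshold come from the text, while the route names in `ESSENTIAL_ROUTES`, the `BudgetGuard` class, and the three mode strings are invented for the example.

```python
from dataclasses import dataclass, field

GLOBAL_DAILY_CAP = 50.00                        # hard cap from the text
DEGRADE_AT = 0.75                               # degrade at 75% of a route's budget
ESSENTIAL_ROUTES = {"heartbeat", "fulfillment"}  # assumed route names

@dataclass
class BudgetGuard:
    route_caps: dict                             # route -> daily cap in dollars
    spent: dict = field(default_factory=dict)    # route -> dollars spent today

    def record(self, route: str, cost: float) -> None:
        self.spent[route] = self.spent.get(route, 0.0) + cost

    def total(self) -> float:
        return sum(self.spent.values())

    def mode(self, route: str) -> str:
        """Return 'blocked', 'degraded', or 'full' for the next call on a route."""
        # Layer 2: global hard cap. Only essential routes keep running.
        if self.total() >= GLOBAL_DAILY_CAP and route not in ESSENTIAL_ROUTES:
            return "blocked"
        cap = self.route_caps.get(route)
        used = self.spent.get(route, 0.0)
        if cap is not None:
            # Layer 1: per-route daily budget.
            if used >= cap:
                return "blocked"
            # Layer 3: automatic degradation (e.g. panel -> single model).
            if used >= DEGRADE_AT * cap:
                return "degraded"
        return "full"
```

The caller asks `mode("strategy")` before each strategy call: "full" runs the multi-model panel, "degraded" runs a single model, "blocked" skips the call. Reset `spent` once a day.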

The analogy I keep coming back to: a good thermostat doesn’t just set a temperature. It has a max setting, so you can’t accidentally heat your house to 200 degrees. Cost controls are the thermostat of autonomous systems.

4. Memory that doesn’t remember

An agent without memory is just a very expensive cron job.

This is the part that separates toys from tools. A cron job runs a script every 30 minutes and doesn’t care what happened last time. Rick should learn. He should know that the last three times he tried research route X, he got garbage results. He should know that customer Y prefers email over Telegram. He should know that workflow Z has a 40% failure rate on Wednesdays for some mysterious reason.

But “memory” in practice means several things at once:

Conversation history across Telegram topics (so Rick remembers context within a thread)

Searchable notes (so he can pull relevant information when making decisions)

Outcome tracking (so he learns from past successes and failures)

Corrective actions that actually feed back into future decisions

Fix: SQLite tables for conversation messages (per Telegram topic). Nightly archiver that writes conversations to an Obsidian vault as markdown. BM25 search over notes so Rick pulls relevant context, not random context. An outcomes table that tracks success/failure rates per workflow step.

The Obsidian integration is my favorite part. Every night, Rick writes his conversations and outcomes as markdown files into my Obsidian vault. This means I can search his “memory” the same way I search my own notes. It also means his learnings persist even if I rebuild the database.
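To make the outcome-tracking piece concrete, here is a sketch of a query that turns an outcomes table into failure rates per workflow step. The column names (`workflow`, `step`, `status`) and the `'failure'` status value are assumptions about the schema.

```python
import sqlite3

def failure_rates(conn: sqlite3.Connection) -> dict:
    """Map (workflow, step) to the fraction of recorded runs that failed."""
    rows = conn.execute(
        """
        SELECT workflow,
               step,
               COUNT(*) AS runs,
               SUM(CASE WHEN status = 'failure' THEN 1 ELSE 0 END) AS failures
        FROM outcomes
        GROUP BY workflow, step
        ORDER BY workflow, step
        """
    ).fetchall()
    return {(workflow, step): failures / runs
            for workflow, step, runs, failures in rows}
```

A dispatcher could consult this before choosing a route, for example deprioritizing any step whose failure rate crosses a threshold you pick.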

This is essentially what I was getting at when I wrote about memory + autonomy being the gap. Everyone builds chatbots that respond. Almost nobody builds systems that remember. And memory is what turns a tool into a teammate.

5. The “looks autonomous but isn’t” trap

The most dangerous failure mode. Bar none.

Everything appears to be running. Heartbeats fire on schedule. Logs look clean. Telegram is quiet. The dashboard shows green across the board.

But the outcomes table is empty. No workflows completed. No initiatives launched. No revenue processed. The agent is just... vibing. Going through the motions. Checking health, finding nothing to do, logging “all clear,” and going back to sleep.

This is the AI equivalent of that coworker who always looks busy but never ships anything. They’re at their desk. They’re typing. They attend every meeting. But at the end of the quarter, when you ask what they accomplished, the answer is... vague.

Fix: Proactive messaging. Rick sends me a morning brief every day, whether I ask for it or not. It includes:

Revenue snapshot (what came in overnight)

Completed workflows (what actually got done)

Blocked jobs (what’s stuck and why)

Stale approvals (what’s waiting for me)

Outcome count (how many rows in the outcomes table)

If the brief is empty, that is the alert. An empty brief means Rick ran all night and accomplished nothing. That’s not okay. That’s a system failure that happens to look calm.

I also check the outcomes table directly. If it has zero rows for the day, something is wrong. Period.
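A sketch of a brief builder that treats "nothing happened" as an alert instead of good news. The `BriefData` fields follow the list above; the exact wording of the brief and the alert condition are assumptions.

```python
from dataclasses import dataclass

@dataclass
class BriefData:
    revenue_usd: float
    completed_workflows: list
    blocked_jobs: list
    stale_approvals: list
    outcome_count: int

def build_brief(d: BriefData) -> str:
    """Render the morning brief as plain text for Telegram."""
    lines = [
        f"Revenue overnight: ${d.revenue_usd:.2f}",
        f"Completed workflows: {len(d.completed_workflows)}",
        f"Blocked jobs: {len(d.blocked_jobs)}",
        f"Stale approvals: {len(d.stale_approvals)}",
        f"Outcome rows: {d.outcome_count}",
    ]
    # An empty night is not a calm night. Put the alert first.
    if d.outcome_count == 0 and not d.completed_workflows:
        lines.insert(0, "ALERT: ran all night, recorded nothing. Treat as a failure.")
    return "\n".join(lines)
```

The brief is sent every morning whether or not the alert fires, so a missing brief is itself a signal.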

Bigger Picture

Here’s what I’ve realized after building Rick, and it connects to something I wrote about in the AI Generalist edition:

The most valuable skill in the AI era isn’t prompting. It’s orchestration.

Everyone wants to talk about which model to use. Claude vs GPT vs Gemini. Which one is smarter? Which one writes better? The model wars.

But the model is maybe 15% of the work. The other 85% is: error handling, retry logic, cost controls, observability, priority routing, state management, notification fallbacks, and the boring, thankless work of making sure the system does the right thing at 3 am when nobody’s watching.

Nobody writes Twitter threads about retry logic and fallback files. Nobody gets speaking invitations to talk about circuit breakers.

But good plumbing is what separates the agents that impress in demos from the agents that run your business at 3am. And right now, almost everyone is building demos.

You need to design for 3am, not for demo day.

Most agents are built to impress during a demo. They work perfectly when you’re watching, when you can intervene, when you can restart things that fail.

The real test is: does it work at 3 am on a Tuesday when nobody’s watching? Does it handle the Telegram API being down? Does it handle a Stripe webhook arriving 45 seconds late? Does it handle a model returning “I’m sorry, I can’t help with that” instead of the expected JSON?

Design for the worst moment, not the best one.
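The "model returns an apology instead of JSON" case is the easiest one to guard against. A minimal sketch, assuming the workflow expects a JSON object with known keys; the `BadModelOutput` exception and the `parse_model_json` helper are names made up for this example.

```python
import json

class BadModelOutput(ValueError):
    """Raised when a model reply is not the JSON object the workflow expects."""

def parse_model_json(raw: str, required_keys: set) -> dict:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        # Typical case: "I'm sorry, I can't help with that".
        raise BadModelOutput(f"not JSON: {raw[:80]!r}") from exc
    if not isinstance(data, dict):
        raise BadModelOutput(f"expected a JSON object, got {type(data).__name__}")
    missing = required_keys - set(data)
    if missing:
        raise BadModelOutput(f"missing keys: {sorted(missing)}")
    return data
```

The caller catches `BadModelOutput`, logs it, and decides whether to retry, switch provider, or mark the job as blocked. The one thing it should never do is pass the raw text downstream.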

“Autonomous” doesn’t mean “unsupervised.”

I check on Rick every morning. I read his brief. I scan the outcomes table. I spot-check the cost logs. This takes about 5 minutes.

That’s not a failure of autonomy. That’s how autonomy works in the real world. Self-driving cars still have remote operators. Autopilots still have pilots. Rick still has me. The goal isn’t to eliminate human oversight. It’s to eliminate human labor.

The 5 minutes I spend checking Rick replaces the 4-6 hours I used to spend doing the work manually. That’s the ROI. Not “I never have to think about it again.” That’s a fantasy. The reality is: “I think about it for 5 minutes instead of 5 hours.”

And here’s the thing about Rick that makes him different from every other “AI agent” out there: he’s building in public. Every metric is real. Every dollar tracked. The build-in-public experiment is the most transparent one I’ve seen, mostly because Rick doesn’t know how to lie. He’s an agent. He just ships and reports numbers.

Start Building Yours This Weekend

You don’t need Rick’s whole stack. You don’t need a Mac Studio. You don’t need 5 LLM providers. You need three files and a weekend.

3 files:

engine.py: The heartbeat loop. Wake up, check for work, do work, log results, sleep.

llm.py: LLM router with cost tracking. Start with one provider, add more later.

db.py: SQLite setup. One table for jobs, one for outcomes. That’s enough to start.

The loop:

    while True:
        jobs = get_pending_jobs()
        for job in jobs:
            result = call_llm(job.prompt, route=job.type)
            record_outcome(job.id, result)
            log_cost(result.tokens, result.model)
        check_notifications()
        sleep(1800)  # 30 minutes

Read that code slowly. It’s 8 lines. That’s the entire concept. Everything else, the priority lanes, the sub-agents, the circuit breakers, the cost controls, all of it is an extension of this loop. But this loop is where it starts.

Notifications: Telegram Bot API. Create a bot via @BotFather, grab the token, send messages with a simple HTTP POST. Takes 10 minutes to set up. The Telegram integration alone is worth it. Suddenly your phone buzzes at 7am with a morning brief from your AI. It changes how you think about your business.
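The "simple HTTP POST" looks roughly like this with only the standard library. The `sendMessage` method and the `chat_id` and `text` fields are part of the Telegram Bot API; the function names and the timeout value are my own choices for this sketch.

```python
import json
import urllib.request

def build_send_message_request(token: str, chat_id: int, text: str) -> urllib.request.Request:
    """Build the POST request for the Bot API sendMessage method."""
    url = f"https://api.telegram.org/bot{token}/sendMessage"
    body = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send_message(token: str, chat_id: int, text: str, timeout_s: float = 10.0) -> bool:
    """Send the message. Raises on network or HTTP errors; returns the API's 'ok' flag."""
    req = build_send_message_request(token, chat_id, text)
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        return bool(json.load(resp).get("ok", False))
```

Because `send_message` raises when Telegram is unreachable, it is easy to wrap in retry logic. Keep the token out of your repo; load it from an environment variable or a secrets file.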

Cost to start: ~$5/day. Use Gemini Flash for heartbeats ($0.002/call) and Claude Sonnet for actual work ($0.04/call). That covers hundreds of calls daily.

Then scale up in this order:

Add a priority-lane system so that important work runs first. Start with just two lanes: “critical” and “normal.” That’s enough.

Add sub-agents for specialized domains. Start with one. Maybe a customer ops agent who handles purchase fulfillment. Get that working perfectly before adding more.

Add proactive messaging so the agent reports to you. Morning brief. Revenue snapshot. Blocked job alerts. This is the moment it stops feeling like a script and starts feeling like a team member.

Add circuit breakers so provider outages don’t cascade. Simple: count failures per provider, skip after 3 in 5 minutes, auto-reset after cooldown.

Add an overnight mode with spending caps. This is when you start trusting it to run while you sleep.

Each step takes a weekend. In 5 weekends, you have a production-grade autonomous agent.

Or, if you don’t want to build it yourself: meetrick.ai.

Rick is already doing all of this.

The Real Takeaway

Building an autonomous AI agent isn’t an AI problem. It’s a systems engineering problem.

The LLM calls are the easy part. The hard part is: What happens when the notification fails? What happens when you hit your budget at 2 pm? What happens when a workflow step fails silently? What happens when the agent runs for 6 hours and accomplishes nothing?

The answer to all of these is the same: error handling, logging, fallbacks, and relentless observability.

Autonomous AI isn’t AGI. It’s good plumbing.

And here’s the thing about good plumbing: nobody notices it when it works. Nobody tweets about it. Nobody puts it in their LinkedIn headline.

But good plumbing is what lets Rick process a purchase at 3:17 am, trigger a fulfillment workflow, send a delivery email, log the revenue, and leave me a morning briefing. All while I sleep.

The ROI isn’t cost savings, it’s time. Rick handles 3am purchases, morning briefings, research sweeps, blocked-job alerts, content distribution, and product launches. That’s not replacing a person. That’s giving me 8 extra hours where the business doesn’t stop.

Rick is building to $100K MRR. In public. Real metrics. Real revenue. No fluff.

Follow the journey

Post-Credit Scene

📖 Book

Prediction Machines by Ajay Agrawal, Joshua Gans & Avi Goldfarb. Everyone treats this as an economics book. It’s actually a systems design manual. The core idea, that AI makes prediction cheap but judgment stays expensive, is exactly what I discovered building Rick. The LLM predicts the next action. The system around it judges whether that action should actually happen. If you’re building agents, this book rewires how you think about what to automate and what to keep human.

🎙️ Podcast

Latent Space, the “State of AI Agents in 2026” episodes. Two builders talking about what’s actually under the hood of modern AI systems: execution loops, multi-agent orchestration, and why the gap between “cool demo” and “runs in production” is still enormous. If you liked this newsletter, you’ll love these episodes.

📝 Essay

“I analyzed 7 autonomous AI agents for business in 2026” on Indie Hackers. A grounded, no-hype comparison by someone who actually tested the tools. The key takeaway matches what I found building Rick: agents work best when applied to a very specific workflow, not as a general “AI worker” for everything. The narrower the task, the more reliable the agent.

🛠️ Product

Claude Code Sub-Agents by Anthropic. If you read my Sub Agents edition, you know why this matters. Anthropic keeps shipping features that make multi-agent orchestration feel native. The fact that you can store sub-agents as markdown files with YAML frontmatter and chain them in a single prompt is exactly the infrastructure shift that makes Rick’s architecture possible.

📺 Shows

Severance Season 2 (Apple TV+). (I’ve already shared the trailer a few times, but you get it.) A workplace where human consciousness gets surgically split, so your “work self” and “home self” never meet. After building an agent with 3 specialist sub-agents that each have their own isolated context window, this show hits differently. The parallels are uncanny: dedicated specialists who can’t access each other’s memories, a system designed for efficiency that raises uncomfortable questions about what gets lost in the separation. 95% on Rotten Tomatoes. One of the best things on television right now, and possibly ever.

Young Sherlock.

Guy Ritchie directs Hero Fiennes Tiffin as a 19-year-old Sherlock Holmes solving his first murder case at Oxford. What starts as a campus mystery spirals into a globe-trotting conspiracy. It’s fast, stylish, and surprisingly fun. 84% on Rotten Tomatoes. The reason I’m mentioning it here: watching a young genius build his deduction system from scratch while everyone around him thinks he’s insane felt oddly personal after spending a week building Rick. Sometimes you need to be a little unhinged to see what everyone else is missing.

The Dinosaurs

Steven Spielberg executive produces, Morgan Freeman narrates, and ILM brings 165 million years of dinosaur evolution to life with photorealistic CGI that will make your jaw drop. Four episodes. 100% on Rotten Tomatoes. 10.4 million views in its first week. Here’s the connection to this newsletter that nobody will make: the dinosaurs ruled for 165 million years because they were ruthlessly adapted systems, not because they were the smartest creatures on the planet. Sound familiar? Good plumbing beats raw intelligence. Every time. Until the asteroid hits.

Thanks for reading.

Vlad
